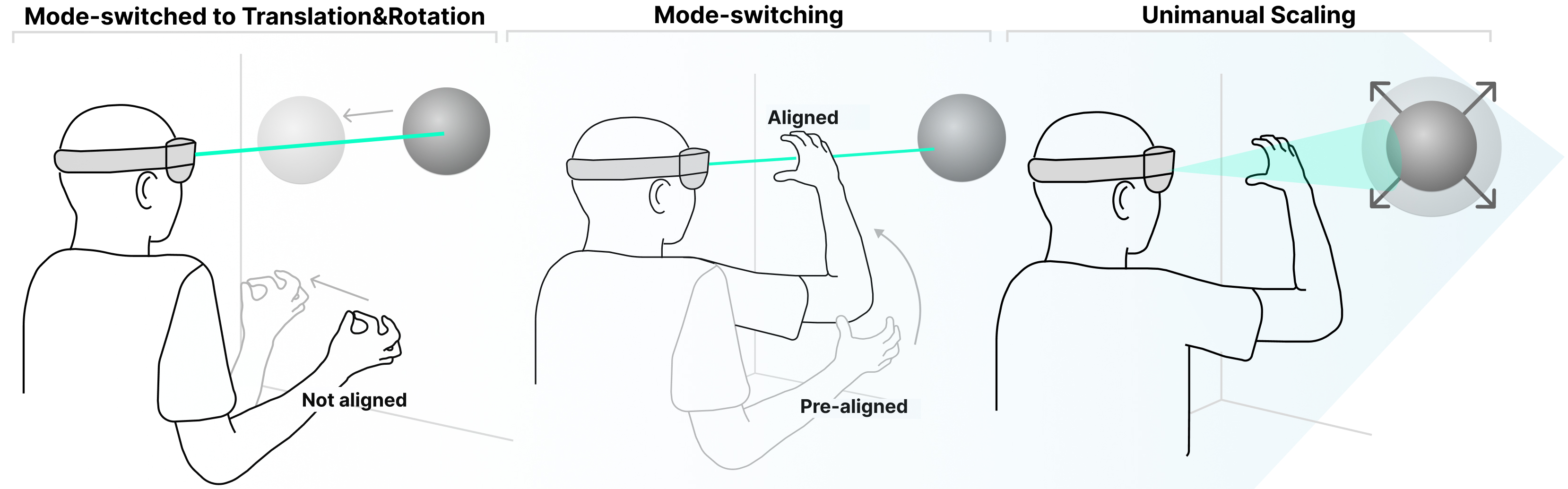

As extended reality (XR) technologies rapidly become as ubiquitous as today's mobile devices, supporting one-handed interaction becomes essential. However, the prevalent Gaze + Pinch interaction model only partially supports unimanual interaction: users can select, move, and rotate objects with one hand, but scaling typically requires both hands. In this work, we leverage the spatial alignment between gaze and hand as a mode switch to enable single-handed pinch-to-scale. We design and evaluate several techniques geared toward one-handed scaling and assess their usability in a compound translate-scale task. Our findings show that all proposed methods effectively enable one-handed scaling, but each method offers distinct advantages and trade-offs. Based on these findings, we derive design guidelines to support future 3D interfaces with unimanual interaction. Our work helps make eye-hand 3D interaction in XR more mobile, flexible, and accessible.
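To illustrate the idea of using gaze-hand alignment as a mode switch, here is a minimal sketch and not the implementation evaluated in this work. It assumes that alignment is measured as the angle between the gaze direction and the eye-to-hand vector, that a pinch made while the hand is aligned enters scaling, and that an unaligned pinch falls back to translation. The function names, the mode labels, and the 10-degree threshold are all hypothetical.

```python
import math


def angle_between_deg(u, v):
    """Return the angle in degrees between two non-zero 3D vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        raise ValueError("vectors must be non-zero")
    # Clamp to [-1, 1] to guard against floating-point drift before acos.
    cos_angle = max(-1.0, min(1.0, dot / (norm_u * norm_v)))
    return math.degrees(math.acos(cos_angle))


def pinch_mode(eye_pos, gaze_dir, hand_pos, threshold_deg=10.0):
    """Pick the mode a pinch should trigger, based on gaze-hand alignment.

    Assumption: the hand counts as aligned when the eye-to-hand vector lies
    within threshold_deg of the gaze direction. The threshold and the
    mapping of aligned -> "scale" are illustrative choices only.
    """
    eye_to_hand = tuple(h - e for h, e in zip(hand_pos, eye_pos))
    angle = angle_between_deg(gaze_dir, eye_to_hand)
    return "scale" if angle <= threshold_deg else "translate"


# Hand held directly in the line of sight versus resting off to the side.
aligned = pinch_mode((0, 0, 0), (0, 0, -1), (0.0, 0.0, -0.5))
unaligned = pinch_mode((0, 0, 0), (0, 0, -1), (0.3, -0.3, -0.4))
```

A single angular threshold is the simplest possible alignment test; a practical system would likely add hysteresis or a dwell time so that small eye or hand jitter near the boundary does not flip modes.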

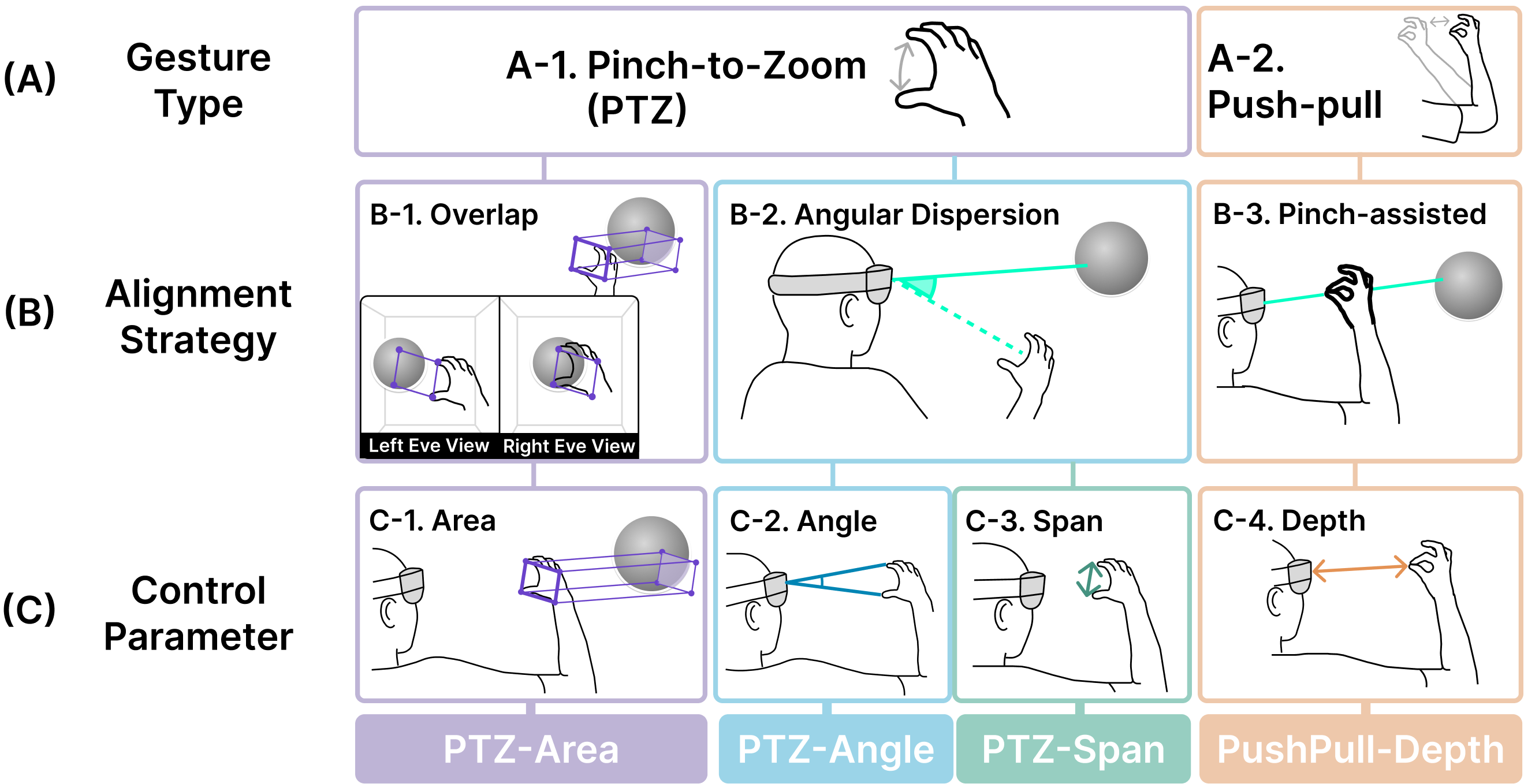

Our design space of unimanual scaling interactions consists of gesture type (A), alignment strategy (B), and control parameters (C), yielding four interactions: PTZ-Area, PTZ-Angle, PTZ-Span, and Push-Pull-Depth scaling.

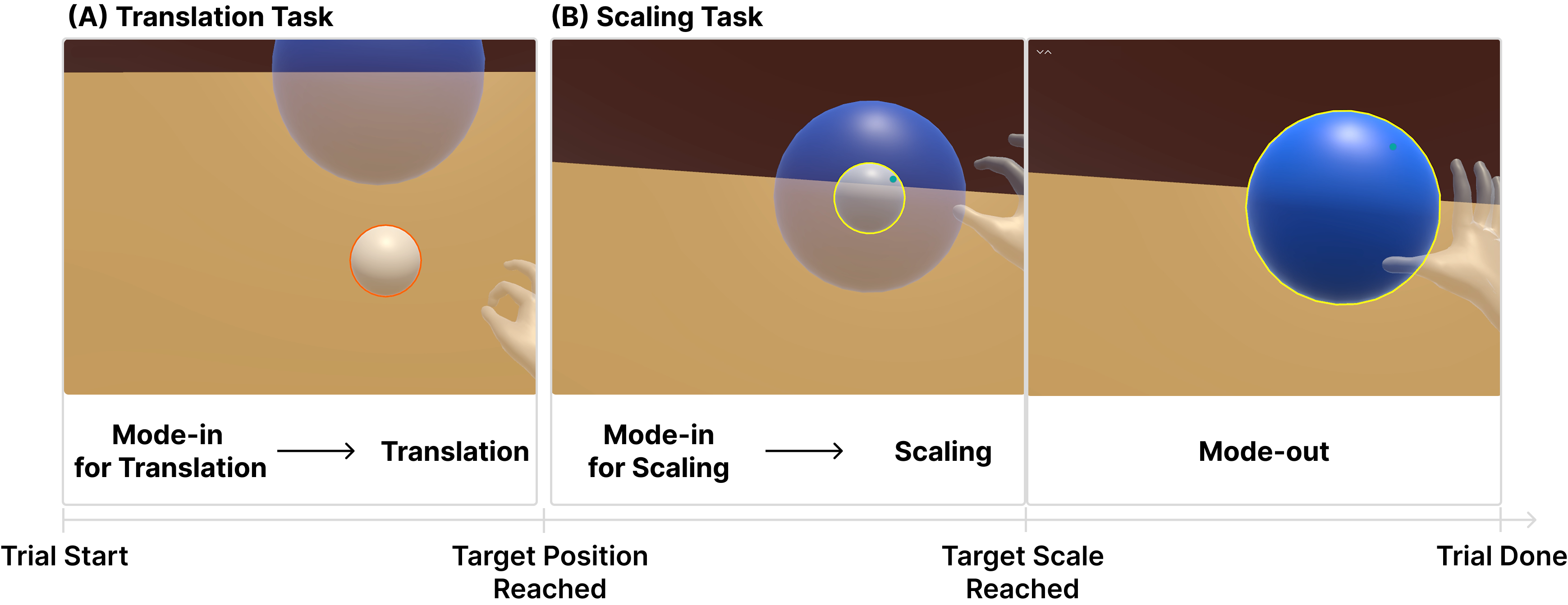

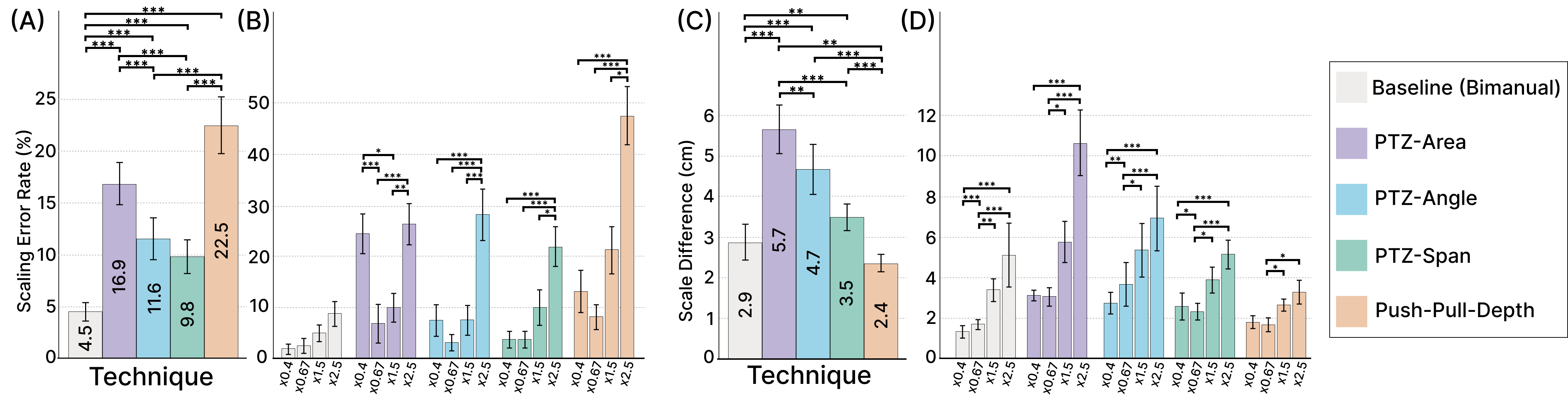

We present a user study evaluating the techniques in a compound translation-scaling task.

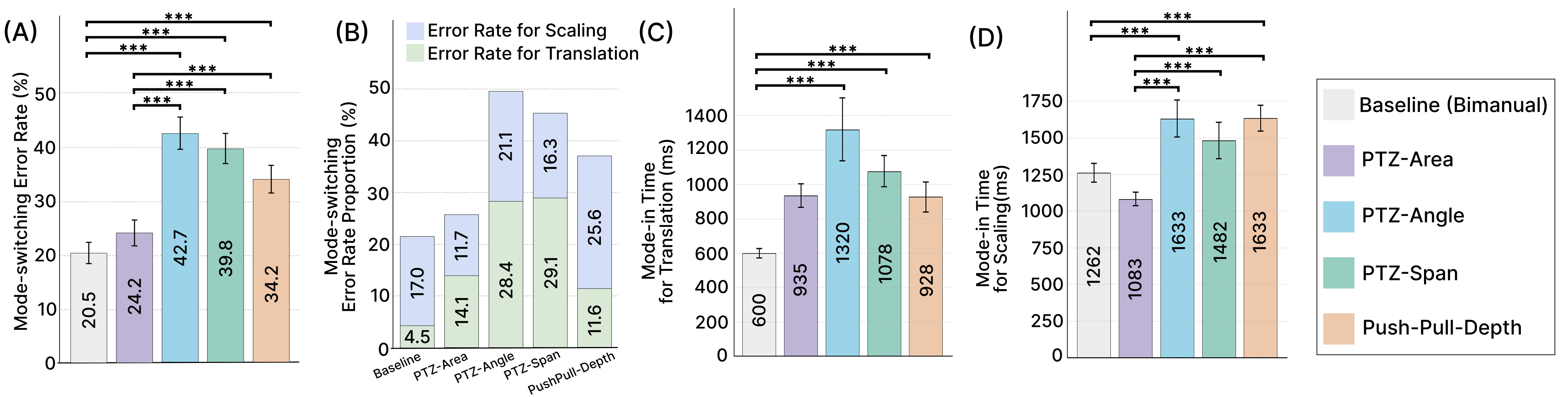

Among the unimanual techniques, PTZ-Area showed the most robust mode-switching performance. Although our unimanual techniques did not outperform the bimanual baseline, our purpose is not to beat it but to provide a necessary unimanual alternative.

Push-pull scaling achieved a scaling precision comparable to the bimanual baseline. Our results revealed trade-offs for each technique, which inform our guidelines on each technique's suitability for practical use cases.